Random Forest, AdaBoost & Decision Trees in Machine Learning

Video: .mp4 (1280x720, 30 fps(r)) | Audio: aac, 44100 Hz, 2ch | Size: 1.82 GB

Genre: eLearning Video | Duration: 22 lectures (2 hours, 58 mins) | Language: English

Learn machine learning with Random Forest, AdaBoost, and Decision Trees

What you'll learn

Knowing how to write Python code for Random Forests.

Implementing AdaBoost using Python.

Having solid knowledge of decision trees and how to extend them with Random Forests.

Understanding the main problems in machine learning and how to solve them.

Understanding the differences between Bagging and Boosting.

Reviewing the basic terminology for any machine learning algorithm.

Requirements

Python basics

NumPy, Matplotlib, scikit-learn

Basic Probability and Statistics

Description

In recent years, we've seen a resurgence in AI, or artificial intelligence, and machine learning.

Machine learning has led to some amazing results, like being able to analyze medical images and predict diseases on par with human experts.

Google's AlphaGo program was able to beat a world champion in the strategy game Go using deep reinforcement learning.

Machine learning is even being used to program self-driving cars, which is going to change the automotive industry forever. Imagine a world with drastically reduced car accidents, simply by removing the element of human error.

Google famously announced that they are now "machine learning first", and companies like NVIDIA and Amazon have followed suit. This shift is going to drive innovation in the coming years.

Machine learning is embedded into all sorts of different products, and it's used in many industries, like finance, online advertising, medicine, and robotics.

It is a widely applicable tool that will benefit you no matter what industry you're in, and it will also open up a ton of career opportunities once you get good.

Machine learning also raises some philosophical questions. Are we building a machine that can think? What does it mean to be conscious? Will computers one day take over the world?

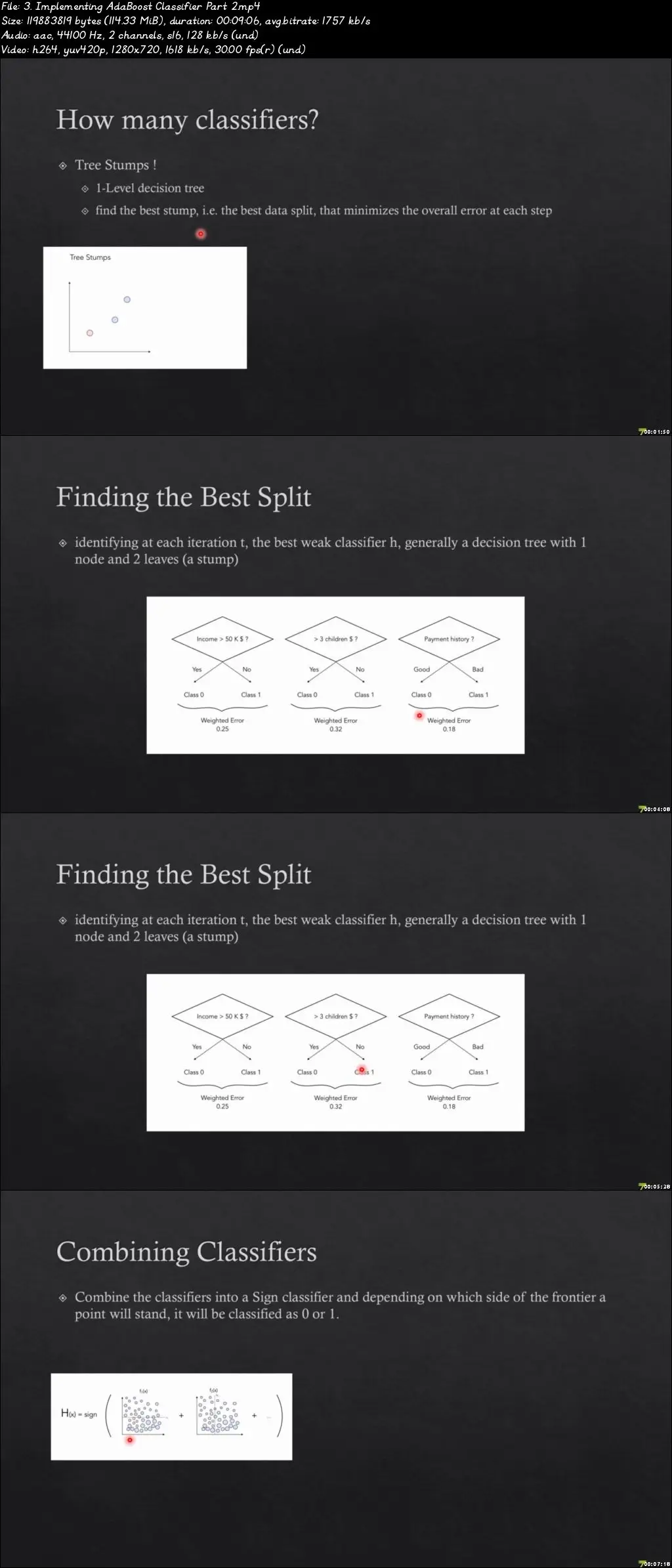

This course is all about ensemble methods.

In particular, we will study the Random Forest and AdaBoost algorithms in detail.
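
As a rough illustration of the boosting idea behind AdaBoost, here is a minimal sketch in plain Python. It is not the course's implementation: it assumes 1-D inputs, labels in {-1, +1}, and decision stumps as weak learners, and the helper names (`fit_stump`, `adaboost_fit`, `adaboost_predict`) are hypothetical.

```python
import math


def stump_output(x, threshold, polarity):
    """A decision stump: predict `polarity` if x > threshold, else `-polarity`."""
    return polarity if x > threshold else -polarity


def fit_stump(xs, ys, weights):
    """Return (threshold, polarity, weighted_error) of the best 1-D stump."""
    sorted_xs = sorted(set(xs))
    # Candidate thresholds: below the smallest x, then midpoints between neighbours.
    candidates = [sorted_xs[0] - 1.0] + [
        (a + b) / 2.0 for a, b in zip(sorted_xs, sorted_xs[1:])
    ]
    best = None
    for threshold in candidates:
        for polarity in (1, -1):
            error = sum(
                w
                for x, y, w in zip(xs, ys, weights)
                if stump_output(x, threshold, polarity) != y
            )
            if best is None or error < best[2]:
                best = (threshold, polarity, error)
    return best


def adaboost_fit(xs, ys, n_rounds):
    """Fit n_rounds stumps, reweighting the training points after each round."""
    n = len(xs)
    weights = [1.0 / n] * n
    ensemble = []
    for _ in range(n_rounds):
        threshold, polarity, error = fit_stump(xs, ys, weights)
        # Clamp the error to avoid log(0) or division by zero.
        error = min(max(error, 1e-10), 1.0 - 1e-10)
        alpha = 0.5 * math.log((1.0 - error) / error)
        ensemble.append((alpha, threshold, polarity))
        # Up-weight misclassified points, down-weight correct ones, renormalise.
        weights = [
            w * math.exp(-alpha * y * stump_output(x, threshold, polarity))
            for x, y, w in zip(xs, ys, weights)
        ]
        total = sum(weights)
        weights = [w / total for w in weights]
    return ensemble


def adaboost_predict(ensemble, x):
    """Sign of the alpha-weighted vote of all stumps."""
    score = sum(
        alpha * stump_output(x, threshold, polarity)
        for alpha, threshold, polarity in ensemble
    )
    return 1 if score >= 0 else -1
```

On a toy pattern such as `xs = [1, 2, 3, 4, 5, 6]` with `ys = [1, 1, -1, -1, 1, 1]`, no single stump can separate the classes, but a weighted vote of a few stumps can, because each round focuses on the points the previous stumps got wrong.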

To motivate our discussion, we will learn about an important topic in statistical learning, the bias-variance trade-off. We will then study the bootstrap technique and bagging as a way to reduce variance without substantially increasing bias.
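
To make the bootstrap and bagging concrete, here is a minimal sketch using only the Python standard library. The setup is an assumption chosen for illustration, not taken from the course: a 1-nearest-neighbour regressor stands in for a high-variance base learner, the toy data is `y = sin(x)` plus Gaussian noise, and all function names are hypothetical.

```python
import math
import random
import statistics


def bootstrap_sample(data, rng):
    """Draw len(data) items from data uniformly at random, with replacement."""
    return [rng.choice(data) for _ in data]


def nearest_neighbour_predict(train, x):
    """High-variance base learner: return the y of the closest training x."""
    return min(train, key=lambda point: abs(point[0] - x))[1]


def bagged_predict(train, x, n_estimators, rng):
    """Average the base learner's predictions over bootstrap resamples."""
    predictions = [
        nearest_neighbour_predict(bootstrap_sample(train, rng), x)
        for _ in range(n_estimators)
    ]
    return statistics.fmean(predictions)


def make_dataset(n_points, rng, noise_sd=0.5):
    """Hypothetical toy data: y = sin(x) + Gaussian noise, x uniform on [0, 3]."""
    xs = [rng.uniform(0.0, 3.0) for _ in range(n_points)]
    return [(x, math.sin(x) + rng.gauss(0.0, noise_sd)) for x in xs]


def prediction_variance(predict, n_trials, rng, x0=1.5):
    """Variance of predict(train, x0) across independently drawn training sets."""
    return statistics.pvariance(
        [predict(make_dataset(30, rng), x0) for _ in range(n_trials)]
    )
```

Calling `prediction_variance` once with `nearest_neighbour_predict` and once with a wrapper around `bagged_predict` lets you compare how much each predictor fluctuates from one training set to the next. Averaging over bootstrap resamples should smooth out much of the noise that a single nearest-neighbour prediction inherits from one training point.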

All the materials for this course are FREE. You can download and install Python, NumPy, and SciPy with simple commands on Windows, Linux, or Mac.

This course focuses on "how to build and understand", not just "how to use". Anyone can learn to use an API in 15 minutes after reading some documentation. It's not about "remembering facts", it's about "seeing for yourself" via experimentation. It will teach you how to visualize what's happening in the model internally. If you want more than just a superficial look at machine learning models, this course is for you.

Who this course is for:

Aspiring Data Scientists

Artificial Intelligence/Machine Learning Engineers

Students/Professionals who have some basic knowledge of machine learning and want to learn about powerful models like Random Forest and AdaBoost

Entrepreneurs, professionals, and students who want to learn and apply data science and machine learning to their work